How Does an Atomic Clock Work?

With an error of only 1 second in up to 100 million years, atomic clocks are among the most accurate timekeeping devices in history.

Caesium clocks in Braunschweig, Germany. Image: ptb.de

9,192,631,770 Oscillations

Atomic clocks are designed to measure the precise length of a second, the base unit of modern timekeeping. The International System of Units (SI) defines the second as the time it takes a caesium-133 atom in a precisely defined state to oscillate exactly:

9 billion, 192 million, 631 thousand, 770 times.

The official definition provides more detail: “The second is the duration of 9,192,631,770 periods of the radiation corresponding to the transition between the two hyperfine levels of the ground state of the caesium-133 atom. This definition refers to a caesium atom at rest at a temperature of 0 Kelvin.”
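To get a feel for the numbers in the definition, the arithmetic can be sketched in a few lines of Python. The constant is the value quoted above; the function name `periods_to_seconds` is only an illustrative choice, not part of any standard library.

```python
from fractions import Fraction

# Number of caesium-133 hyperfine periods in one SI second (exact by definition).
CS_PERIODS_PER_SECOND = 9_192_631_770


def periods_to_seconds(periods: int) -> Fraction:
    """Convert a count of caesium periods into seconds, without rounding."""
    return Fraction(periods, CS_PERIODS_PER_SECOND)


# Duration of a single period, expressed in picoseconds (1e-12 s).
one_period_ps = float(periods_to_seconds(1)) * 1e12

print(periods_to_seconds(CS_PERIODS_PER_SECOND))  # exactly one second
print(round(one_period_ps, 2))                    # roughly 108.78 ps
```

In other words, a single period lasts a little under 109 picoseconds, which is why counting them allows such fine time resolution.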


Working Principle of Atomic Clocks

In an atomic clock, the natural oscillations of atoms act like the pendulum in a grandfather clock. However, atomic clocks are far more precise than conventional clocks because atomic oscillations have a much higher frequency and are much more stable.

There are many different types of atomic clocks, but they generally share the same basic working principle, which is described below:

Heat, Bundle, and Sort

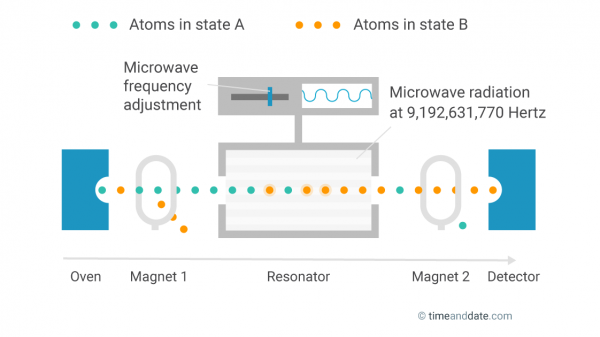

First, the atoms are heated in an oven and bundled into a beam. Each atom has one of two possible energy states. They are referred to as hyperfine levels, but let's call them state A and state B.

A magnetic field then removes all atoms in state B from the beam, so only atoms in state A remain.

The inner workings of an atomic clock.

Irradiate and Count

The state-A atoms are sent through a resonator where they are subjected to microwave radiation, which triggers some of the atoms to change to state B. Behind the resonator, atoms that are still in state A are removed by a second magnetic field. A detector then counts all atoms that have changed to state B.

Tune and Measure

The percentage of atoms that change their state while passing through the resonator depends on the frequency of the microwave radiation. The closer that frequency is to the inherent oscillation frequency of the atoms, the more atoms change their state.

The goal is to perfectly tune the microwave frequency to the oscillation of the atoms, and then measure it. After exactly 9,192,631,770 oscillations, a second has passed.
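The tuning step can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not how any particular clock's control loop is built: the bell-shaped (Lorentzian) response curve, the 100 Hz linewidth, and the names `transition_fraction` and `tune` are all made up for illustration. The idea it shows is the one described above: adjust the microwave frequency in whichever direction makes the detector count more state-B atoms.

```python
CS_HZ = 9_192_631_770  # caesium-133 transition frequency in hertz


def transition_fraction(microwave_hz: float, linewidth_hz: float = 100.0) -> float:
    """Toy model: fraction of atoms that flip from state A to state B.

    Assumes a Lorentzian response that peaks at 1.0 when the microwave
    frequency matches the atoms exactly. The linewidth is hypothetical.
    """
    detuning = microwave_hz - CS_HZ
    half_width = linewidth_hz / 2
    return half_width**2 / (detuning**2 + half_width**2)


def tune(start_hz: float, step_hz: float = 64.0, min_step_hz: float = 0.001) -> float:
    """Step toward the frequency that maximises the detector signal."""
    f = start_hz
    while step_hz >= min_step_hz:
        here = transition_fraction(f)
        if transition_fraction(f + step_hz) > here:
            f += step_hz          # more atoms flip at a higher frequency
        elif transition_fraction(f - step_hz) > here:
            f -= step_hz          # more atoms flip at a lower frequency
        else:
            step_hz /= 2          # neither side is better: refine the search
    return f


# Start 500 Hz too high and let the search find the peak.
locked_hz = tune(CS_HZ + 500.0)
print(locked_hz - CS_HZ)  # remaining offset from the true frequency, in Hz
```

Once the microwave source is locked to the peak in this way, counting 9,192,631,770 of its cycles marks out one second.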

How Accurate Are Atomic Clocks?

The accuracy of atomic clocks varies and is constantly improving. With an expected error of only 1 second in about 100 million years, the NIST-F1 in Boulder, Colorado, is one of the world's most precise clocks.

It is a caesium fountain clock, in which lasers concentrate the atoms into a cloud, cool them down, and then toss them upwards. Cooling slows the atoms down, allowing for a longer measurement period and a more precise approximation of their natural frequency.
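A figure such as "1 second in about 100 million years" can be turned into a fractional error with a short calculation. The sketch below assumes a year of 365.25 days; the function name `fractional_error` is illustrative only.

```python
SECONDS_PER_DAY = 86_400
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY  # assumption: 365.25-day year


def fractional_error(error_s: float, years: float) -> float:
    """Accumulated error divided by the elapsed time, as a pure ratio."""
    return error_s / (years * SECONDS_PER_YEAR)


# 1 second in about 100 million years, the figure quoted for NIST-F1.
nist_f1 = fractional_error(1, 100e6)

# The same ratio expressed as drift per day, in picoseconds.
drift_per_day_ps = nist_f1 * SECONDS_PER_DAY * 1e12

print(f"{nist_f1:.2e}")          # about 3.17e-16
print(round(drift_per_day_ps))   # about 27 ps per day
```

Put differently, such a clock gains or loses only a few tens of picoseconds per day.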

Optical Clocks

Scientists are currently developing devices that are even more accurate than today's microwave-based atomic clocks. The optical atomic clock uses light in the visible spectrum to measure atomic oscillations. The frequency of this light is about 50,000 times higher than that of microwave radiation, so each second is divided into far more oscillations, allowing for a more precise measurement. The expected deviation of the new optical clock is 1 second in 15 billion years.
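As a rough consistency check, the "about 50,000 times higher" figure can be combined with the caesium frequency to see where the resulting light falls in the spectrum. This is a back-of-the-envelope sketch: the factor 50,000 is the approximate value from the text, not the frequency of any specific optical clock, and the 380 to 750 nm range for visible light is a commonly used approximation.

```python
CS_HZ = 9_192_631_770          # caesium microwave transition, in hertz
SPEED_OF_LIGHT = 299_792_458   # metres per second (exact by definition)

# Approximate optical frequency, using the rough factor from the text.
optical_hz = 50_000 * CS_HZ

# Wavelength = speed of light / frequency, converted to nanometres.
wavelength_nm = SPEED_OF_LIGHT / optical_hz * 1e9

print(f"{optical_hz:.2e} Hz")    # about 4.60e+14 Hz
print(round(wavelength_nm))      # about 652 nm
print(380 < wavelength_nm < 750) # inside the approximate visible range
```

A wavelength of roughly 650 nm corresponds to red light, which fits the description of a clock that works in the visible spectrum.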

Why Do We Need Atomic Clocks?

Some 400 atomic clocks around the world contribute to the calculation of International Atomic Time (TAI), one of the time standards used to determine Coordinated Universal Time (UTC) and local times around the world.

Satellite navigation systems like GPS, GLONASS, and Galileo also rely on precise time measurements to calculate positions accurately.
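The link between timing and position follows from the fact that navigation signals travel at the speed of light, so any clock error translates directly into a distance error. The sketch below shows only this basic relationship; the function name `range_error_m` is illustrative, and real receivers combine several satellites and apply further corrections.

```python
SPEED_OF_LIGHT = 299_792_458  # metres per second (exact by definition)


def range_error_m(clock_error_s: float) -> float:
    """Distance error caused by a given timing error on a radio signal."""
    return SPEED_OF_LIGHT * clock_error_s


print(round(range_error_m(1e-9), 2))  # 1 nanosecond  -> about 0.3 m
print(round(range_error_m(1e-6), 1))  # 1 microsecond -> about 299.8 m
```

A timing error of a single microsecond already shifts the measured distance by about 300 metres, which is why satellites carry atomic clocks.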